Improving data safety on Linux systems using ZFS and BTRFS

A German translation of this article has been published at NerdZoom.

The default file system for new Linux system installations is usually still ext4. It has significant advantages over older file systems, especially because it uses journaling. Journaling keeps the file system consistent after a power failure or an unexpected system lockup, which prevents much of the data loss older file systems suffered in those situations. But ext4 is still missing many features people have come to expect from a modern file system:

- Consistent access to older file system states, e.g. for making backups without interrupting the system (snapshots)

- More than one file system hierarchy on a single volume, removing the need for partitions and potentially leading to higher storage efficiency (subvolumes)

- Online compression

- Data deduplication (data blocks with the same content are only stored once)

- Additional checksums to prevent data corruption

- Stronger coupling to the storage layer(s), so checksums can be used to make better decisions in fault situations

Adding these features to ext4 would require major code changes and changes to the on-disk data structures (the disk layout). There would also be a need for conversion strategies, and people would not be able to use their newly formatted drives on old systems. File system developers try to avoid this to some degree by using generic data structures and feature flags. Often you can decide at file system creation time which features to enable, and even enable and disable some of them later on.

I don’t care about snapshots, subvolumes, deduplication or compression, but I do care about data safety. So I really don’t want to use a file system without checksums anymore.

Why you should care about data safety

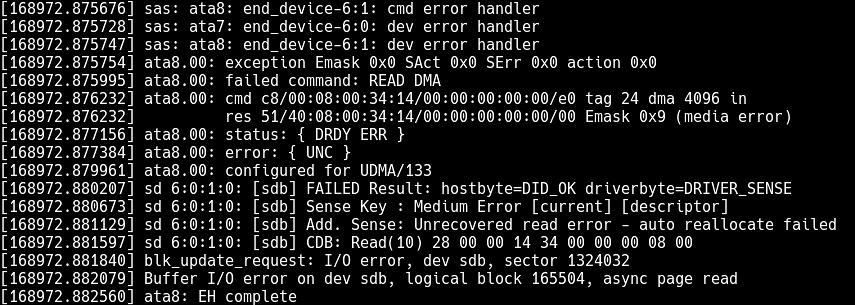

Data is usually stored on the drives in blocks (or “sectors”, as they were called in the 1980s). The drive is expected to tell the operating system when it cannot read one of these blocks anymore. This does happen, especially with magnetic drives. Sometimes the disk is old and can no longer be magnetized strongly enough to generate the correct signals during the next read. Or the read head is not positioned correctly and reads the wrong sector. Or background radiation has flipped a bit. Solid state drives also suffer from wear: at some point the flash cells are no longer able to hold a charge until the next read cycle. Or the drive/controller firmware has bugs and screws up.

In practice drives and controllers cannot be trusted, and the assumption that they report every unreadable block does not hold. I’ve seen way too many cases in which a drive/controller happily returned the wrong data, and even different data on each access to the same sector. Either the drive didn’t know the data was garbage because it had no internal means to check it, or the firmware was broken. According to an old study by DeepSpar, a data recovery company, human error was the main cause of data loss in just twelve percent of the analysed cases and software errors accounted for another thirteen percent, but total drive failures (38%) and drive read instability (30%) made up the lion’s share. This fits in with my own experience.

I don’t expect the situation to improve. Hardware failure rates will not decrease considerably, and software errors seem to get worse. I’ve never had to update a magnetic drive’s firmware in all of my career. But with SSDs this has become so common that there are even GUI firmware update tools for normal users. Drives are also getting larger and are used longer, and migrating data takes longer and longer. So you really have to expect your drive to silently corrupt your data at some point, which is bad for every single one of us.

Silent data corruption even defeats backups to some more reliable server or cloud provider. If your data is corrupted on the client, the backup software will start to overwrite the good backup with faulty data, and you will only find out when it’s way too late. This also affects all transfers between devices, e.g. using flaky USB drives. The data you store on them with the first device might not be the data the second device will read from it later.

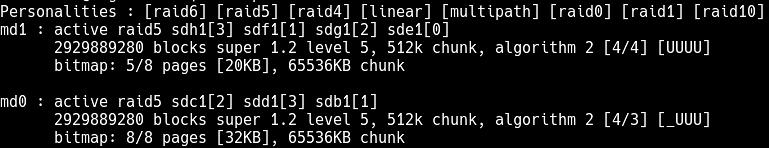

Even RAID doesn’t help

People expect RAID arrays to keep their data safe, but almost all implementations don’t. RAID 0 has no redundancy to begin with: if a drive loses a block, the data is gone. But RAID 1/5/6, which use duplication or parities to increase redundancy, also have a huge problem: everybody sacrifices data safety for performance. The redundant copies or the parities are simply not checked until a drive reports a read failure, because otherwise the whole array would, at best, be just as fast as a single drive.

Over the last twenty years I’ve only seen one hardware RAID controller which could be forced into checking parities at every read. Software implementations like Linux md-raid and LVM cannot be forced to do so either. All you can do is schedule regular parity scans of the full array, but if the data gets silently corrupted between two scans, you will have been working with the corrupted data for a while. Congratulations.
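To make the limits of a periodic parity scan more concrete, here is a minimal Python sketch of single-parity (RAID 5 style) XOR parity. It is only an illustration: the function names are made up and have nothing to do with the actual md-raid or LVM code, and the tiny four-byte blocks are an assumption to keep the example readable.

```python
from functools import reduce

def xor_blocks(blocks):
    """XOR equally sized blocks byte by byte (single-parity calculation)."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def stripe_is_consistent(data_blocks, parity_block):
    """What a parity scan checks: does the stored parity match the data?"""
    return xor_blocks(data_blocks) == parity_block

# Hypothetical stripe of three data blocks plus one parity block.
data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data)
assert stripe_is_consistent(data, parity)

# Silent corruption: one bit flips in the second data block.
damaged = [data[0], bytes([data[1][0] ^ 0x01]) + data[1][1:], data[2]]
scan_ok = stripe_is_consistent(damaged, parity)  # False: the scan notices it
# A flipped bit in any other data block, or in the parity block itself, would
# cause a mismatch as well, so the scan alone cannot tell which block is wrong.
```

Until the next scan runs, normal reads simply return the damaged block, because nothing compares it against the parity.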

In the case of RAID 1, which simply stores copies of the data on two drives, it gets even worse. If you were to read both copies at all times, which no one does, and they’re not the same, which of the copies do you trust? There’s no automatic solution here. So most implementations (including Linux md-raid) toss a coin and trust the corrupted block 50% of the time. RAID 1 without additional precautions is worthless. That is very ironic, since so many people entrust their two-drive RAID 1 NAS boxes with their backups.

How checksums protect your data

The standard solution to all these problems is calculating a checksum over every data block and storing it separately. Every time a block is read, the file system code checks if the checksum still matches the data. If it doesn’t, the drive is lying. Enterprise drives can store checksums in a separate space by using slightly larger on-disk blocks of 520 or 4104 bytes in size. The additional eight bytes can be accessed by the operating system using a feature called T10 Protection Information (T10-PI). On consumer drives the file system has to store the checksums with the rest of the data, slightly reducing the available space.
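The idea can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how ZFS or BTRFS are implemented: the `ChecksummedStore` class is hypothetical, the “drive” is just a dictionary, and CRC-32 from `zlib` stands in for whatever checksum algorithm a real file system uses.

```python
import zlib

class ChecksummedStore:
    """Toy block store that keeps a checksum for every block, apart from the data."""

    def __init__(self):
        self.blocks = {}      # block number -> bytearray (the "drive")
        self.checksums = {}   # block number -> CRC-32 (stored separately)

    def write(self, block_no, data):
        self.blocks[block_no] = bytearray(data)
        self.checksums[block_no] = zlib.crc32(data)

    def read(self, block_no):
        data = bytes(self.blocks[block_no])
        if zlib.crc32(data) != self.checksums[block_no]:
            # The drive returned something other than what was written.
            raise OSError(f"checksum mismatch in block {block_no}")
        return data


store = ChecksummedStore()
store.write(0, b"important data")
intact = store.read(0)            # matches the checksum, returned as-is

store.blocks[0][3] ^= 0x04        # simulate a silently flipped bit on the drive
try:
    store.read(0)
    corruption_detected = False
except OSError:
    corruption_detected = True    # the caller gets an error, not bad data
```

The important design point is that the check happens on every read, so corruption is noticed the moment the data is used rather than at the next scheduled scan.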

This comes in handy in all kinds of situations. Even if you have just one drive, you now get to know immediately when the drive is acting up before something bad happens. The operating system signals read errors to the applications instead of passing corrupt data. The backup software doesn’t overwrite the good backup. You don’t end up with corrupted data on the second system when transferring data using that flaky USB drive.
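Why a read error protects the backup can be shown with a small sketch. It assumes a hypothetical `update_backup` helper and a `read_source` callable that stands in for reading the file from a checksumming file system; real backup tools are of course more involved.

```python
import os
import tempfile

def update_backup(read_source, backup_path):
    """Replace the backup only if the source could be read without error.

    read_source is a hypothetical callable returning the file's bytes. On a
    checksumming file system a corrupted block surfaces here as an OSError.
    """
    try:
        data = read_source()
    except OSError:
        return False                      # leave the existing backup untouched
    tmp_path = backup_path + ".tmp"
    with open(tmp_path, "wb") as tmp:
        tmp.write(data)
    os.replace(tmp_path, backup_path)     # swap in the new copy in one step
    return True


def failing_read():
    raise OSError("Input/output error")   # simulated checksum failure


with tempfile.TemporaryDirectory() as workdir:
    backup = os.path.join(workdir, "photo.bak")
    first_run = update_backup(lambda: b"good data", backup)   # source is fine
    second_run = update_backup(failing_read, backup)          # source is corrupt
    with open(backup, "rb") as f:
        kept = f.read()                   # still the data from the first run
```

Without checksums the second run would have received garbage instead of an error and would have happily replaced the good backup with it.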

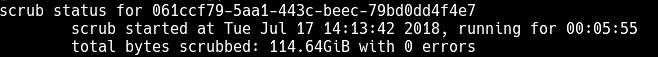

Checksums also solve most of the mentioned RAID problems. In a RAID 1, you are now able to find out which of the two drives is lying and can choose the good copy. You can build fast, safe RAID 5/6 because now you get to know when the data has been corrupted and can then reconstruct it from the parities. You can even try to overwrite the corrupted data with the good copy again, something called “resilvering”. Often the drive will remap the block to a good one and doesn’t have to be replaced immediately.
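For the RAID 1 case, a minimal sketch of such a self-healing read could look like this. The `read_mirrored` function is made up for illustration, the two “drives” are plain byte arrays, and CRC-32 is again only a stand-in for the file system’s real checksum.

```python
import zlib

def read_mirrored(copies, expected_crc):
    """Return a verified block from a mirror and repair the bad copies.

    copies: list of bytearrays, one per drive, all meant to hold the same block.
    expected_crc: the checksum the file system stored for this block.
    """
    good = next((bytes(c) for c in copies if zlib.crc32(c) == expected_crc), None)
    if good is None:
        raise OSError("no copy matches the checksum")
    for c in copies:
        if zlib.crc32(c) != expected_crc:
            c[:] = good                   # overwrite the lying copy in place
    return good


block = b"mirrored block"
crc = zlib.crc32(block)
drive_a, drive_b = bytearray(block), bytearray(block)
drive_a[0] ^= 0x80                        # drive A silently corrupts its copy

result = read_mirrored([drive_a, drive_b], crc)
# result is the good data from drive B, and drive A has been rewritten with it.
```

No coin toss is needed anymore: the checksum decides which copy is trustworthy, and the read only fails if every copy is bad.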

Why ZFS or BTRFS?

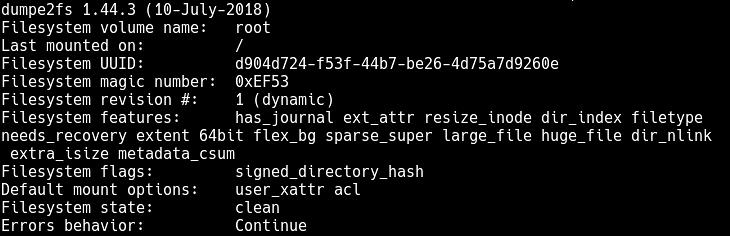

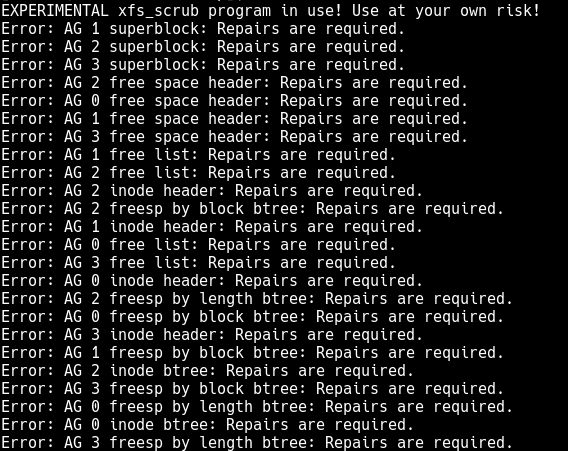

Both ext4 and XFS were supposed to get checksums for both metadata and data blocks. xfsprogs has enabled metadata checksums by default for all new XFS file systems as of version 3.2.3 (released in May 2015). e2fsprogs didn’t make metadata_csum the default before version 1.44.0 (released in March 2018). Older Linux distributions usually don’t even ship a sufficiently recent version of e2fsprogs, so the feature goes unused pretty much all of the time. In both cases only the metadata is covered, the data blocks themselves are still not checksummed. The experimental xfs_scrub command can perform an online check of an XFS file system, but for ext4 there’s no online file system check.

Ext4 and most other file systems are also only very loosely coupled to the storage layer. For example, they don’t know they’re running on a RAID array, they just see a single volume. Even if checksums were fully added and enabled, none of the RAID tricks mentioned above could be pulled off. So neither XFS nor ext4 is a good solution.

Sun Microsystems invented ZFS as a modern file system with lots of features, among them checksumming for all data. It has its own storage layer, its own RAID level implementations and can perform all checks while the file system is mounted. It was open-sourced as part of OpenSolaris, but cannot be merged into the Linux kernel due to licensing constraints. Ubuntu is the only Linux distribution which ships a ready-made ZFSonLinux kernel module, with all other distributions things are more complicated. I recommend using ZFS if you can, but even I only use it in my Ubuntu-based NAS. It is also rather complicated to set up and was not designed for removable devices, making it less attractive for many use cases.

BTRFS has many of the same features, including checksums and its own RAID level implementations, but is part of the standard Linux kernel and licensed under the GPL. It works out of the box on most Linux installations and has done so for many years. In contrast to ZFS it supports external drives easily, just plug them in and mount them using your favourite GUI tool. Sadly BTRFS has some major problems with potential data loss in RAID 5/6 modes, so these modes should not be used. It is advisable to have a look at the BTRFS Wiki to check if your use case is fully supported. I’ve been using it in RAID 1 and RAID 10 mode for scratch arrays and all external USB drives for about two years without problems. To my knowledge Synology is the only manufacturer out there which offers BTRFS on their NAS systems, but I did not check their exact implementation.

Btrfs is also used by default by openSUSE, even if only for /, while XFS is used for /home. As an openSUSE user I can say I have never had problems with these file systems. On my workstation, where I have a lot of data, I use BTRFS for /home as well, which gives me the possibility of having snapshots there too. In my opinion it is an excellent solution.

I decided to try BTRFS on a recent small server build, and it was fine at first, until all of a sudden, it wasn’t. After a clean reboot, one of two BTRFS volumes would no longer mount, and as far as I could figure out, there was no way to repair it. The documentation suggested such useful options as contacting the developers on IRC to have them produce a fix tailored to my specific problem. I could have recovered the data from the volume, but fortunately it was only backups, so I chose to recreate them instead. There was no way to get the volume back online, though.

Sure, it protected me from hypothetical bitrot but I’d rather do checksummed backups of an EXT4 volume than risk my data spontaneously disappearing when I reboot.

Sure, ZFS has its advantages. It does, however, require a minimum amount of disk storage to work properly, 500 gig if I’m not mistaken. HAMMER, a similar file system found exclusively on DragonflyBSD, addresses the same issues as ZFS and OpenZFS. Any ZFS file system works well handling zettabytes of data, whereas HAMMER handles petabytes of data. HAMMER is a file system that shouldn’t be overlooked merely because not many people have heard of it.

Where do the 500 GB come from? The minimum disk size for ZFS is 128 Megabytes.

The problem with HAMMER is simply the “found exclusively on DragonflyBSD” part. DragonflyBSD has no market share, neither with desktop users (insufficient hardware compatibility) nor with enterprise users (no paid support contracts).

I haven’t tried it yet, but I understand Alt-F firmware on D-Link NAS (e.g. DNS-323, DNS-321) supports both ZFS and BTRFS.

Anyone have experience with this?