The Guardian Project’s “Proof Mode” app for activists doesn’t work

On February 24, 2017, The Guardian Project (not to be confused with the newspaper) presented “Proof Mode”, an app for Android smartphones which promises to add cryptographic “proof” to every image and video file recorded by the device, so as to prove the material is authentic and hasn’t been tampered with. Sadly, none of these promises held up when I tested them.

When I first read the announcement, I was already quite sceptical because of its bold claims:

(..) While it is often enough to use the visual pixels you capture to create awareness or pressure on an issue, sometimes you want those pixels to actually be treated as evidence. This means, you want people to trust what they see, to know it hasn’t been tampered with, and to believe that it came from the time, place and person you say it came from. Enter, ProofMode, a light, minimal “reboot” of our more heavyweight, verified media app, CameraV. Our aim was to create a lightweight (< 3MB!), almost invisible utility (minimal battery impact!), that you can run all of the time on your phone (no annoying notifications or popups), that automatically adds extra digital proof data to all photos and videos you take. This data can then be easily shared, when you really need it, through a “Share Proof” share action, to anyone you choose over email or a messaging app, or uploaded to a cloud service or reporting platform.

Creating proof for something is not easy, after all, especially when the data source is a personal device which can easily be exposed to huge amounts of tampering. Creating a proof strong enough to be treated as “evidence” is incredibly hard in many jurisdictions. But let me first summarise what this app wants to accomplish:

- Prove that the data was recorded on the given device

- Prove that it hasn’t been tampered with

- Prove that it came from the person who claims to have recorded it

- Prove that it was recorded at the claimed time

- Prove that it was recorded at the claimed location

Before I try to come up with all the reasons why I don’t think this is possible, let us have a look at how the app is supposed to work.

How it’s supposed to work

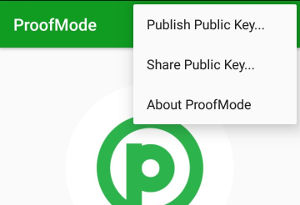

When you run Proof Mode for the first time, it generates a PGP key pair in the background. The user interface is very simple:

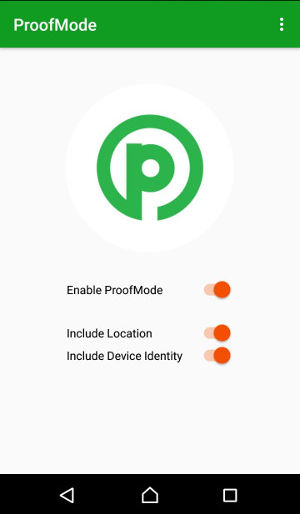

When you enable the “Enable ProofMode” switch, a background service is started and automatically signs all media (pictures and video) recorded with the built-in camera(s). There is no need to enter passwords or manually encrypt or sign anything; everything happens automatically in the background. Before you record anything, you should probably send your public key to your friends and the media though, so they can verify your proofs later. This can be accomplished using the menu:

This will open the default Android “Share” overlay and let you send the public key to other people, e.g. using e-mail, instant messengers and such. Your friends would then import your public key into their key rings, for example using GnuPG:

$ gpg --import public.key

gpg: key 2EA40444A0E68E8B: public key "noone@proofmode.witness.org" imported

gpg: Total number processed: 1

gpg: imported: 1

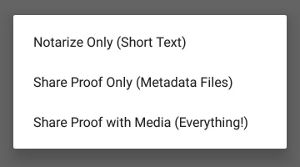

Now you go and record some incriminating footage using any standard camera app. When you want to prove authenticity to someone, you just go to the Gallery, navigate to the content in question, press the “Share” icon, select “Share Proof”, and Proof Mode pops up, offering three options:

“Notarize Only” will just share the following text:

IMG_20170307_143742.jpg was last modified at 7 Mar 2017 13:37:44 GMT and has a SHA-256 hash of 1702a09738a5c2fa75bc6f0b71d0cf60c0e5f2ebd55c0bb3a6fc1652c95a0801

This proof is signed by PGP key 0x9317e5a6373430241c97df9f2ea40444a0e68e8b

This isn’t a proof in itself; it just states that a file called IMG_20170307_143742.jpg with the given SHA-256 hash was signed at the current time by the given key. According to the original CameraV app documentation, “This provides a way to timestamp the media with a third-party, and ensure that any tampering or modification of the media can be later detected”.
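A recipient can at least check that the file they received matches the hash quoted in the notarization text. Here is a minimal Python sketch, assuming the message keeps the wording shown above. The function names `sha256_of_file` and `matches_notarization` are my own and not part of Proof Mode:

```python
import hashlib
import re


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA-256 digest of the file at `path`."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so large videos do not have to fit into memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_notarization(path, notarization_text):
    """Compare a file's hash with the hash quoted in a notarization message.

    Assumption: the message contains 'SHA-256 hash of <64 hex digits>',
    as in the example message above.
    """
    found = re.search(r"SHA-256 hash of ([0-9a-fA-F]{64})", notarization_text)
    if found is None:
        raise ValueError("no SHA-256 hash found in notarization text")
    return sha256_of_file(path) == found.group(1).lower()
```

A match only shows that the file is byte-identical to whatever was hashed. It says nothing about who recorded it, when, or where.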

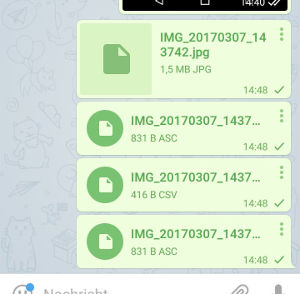

“Share Proof” has two sub-options, the only difference being that one only shares the metadata and signature, while the other also shares the original media file. In my case I’m going to share everything and send it to myself via Telegram, and this is what I end up with:

The first file is the original picture I took earlier. The IMG_20170307_143742.jpg.proof.csv file contains metadata and additional sensor data gathered from the phone to make the proof more robust. Here’s an example for my file:

CurrentDateTime0GMT,NetworkType,File,Language,Wifi MAC,Hardware,Manufacturer,Modified,DataType,Locale,SHA256,IPV4,ScreenSize,DeviceID,Network,

<redacted>,Wifi,/storage/emulated/0/DCIM/Camera/IMG_20170307_143742.jpg,Deutsch,<redacted>,Sony E6553,Sony,<redacted>,Mobile Data,DEU,1702a09738a5c2fa75bc6f0b71d0cf60c0e5f2ebd55c0bb3a6fc1652c95a0801,172.23.202.60,4.856379625316121,<redacted>,Wifi,

To sum it up, this file contains:

- Name, SHA256 hash and modification timestamp of the original file

- The current time in GMT

- Current type of data network, network name, IPv4 address

- If enabled: Device ID, WiFi MAC, location data (latitude, longitude, location provider used, accuracy, altitude, bearing, speed, time)

- Device manufacturer and screen size

- Language and locale
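To inspect such a proof file programmatically, the header row and the value row can be zipped into a dictionary. The following Python sketch assumes the two-row layout with trailing commas seen in my file. `parse_proof_csv` is a name I made up, and the sample only uses three of the columns:

```python
import csv
import io


def parse_proof_csv(text):
    """Parse a proof CSV (one header row, one value row) into a dict.

    Both rows end with a trailing comma in the example above, which
    produces an empty last column; columns without a name are dropped.
    """
    # Skip blank lines so the layout with or without spacing both work.
    rows = [row for row in csv.reader(io.StringIO(text)) if row]
    if len(rows) < 2:
        raise ValueError("expected a header row and a value row")
    header = [name.strip() for name in rows[0]]
    values = [value.strip() for value in rows[1]]
    return {name: value for name, value in zip(header, values) if name}


# Shortened sample using three of the columns from the file shown above.
sample = (
    "File,SHA256,IPV4,\n"
    "/storage/emulated/0/DCIM/Camera/IMG_20170307_143742.jpg,"
    "1702a09738a5c2fa75bc6f0b71d0cf60c0e5f2ebd55c0bb3a6fc1652c95a0801,"
    "172.23.202.60,\n"
)
proof = parse_proof_csv(sample)
print(proof["File"], proof["SHA256"])
```

Keep in mind that every value in this file is reported by the phone itself, so parsing it tells you what was claimed, not what is true.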

The additional two files with the .asc suffix are the PGP signature files. Let’s check my signatures:

$ gpg --verify IMG_20170307_143742.jpg.asc

gpg: assuming signed data in 'IMG_20170307_143742.jpg'

gpg: Signature made Tue Mar 7 14:37:55 2017 CET

gpg: using RSA key 2EA40444A0E68E8B

gpg: Good signature from "noone@proofmode.witness.org" [unknown]

Primary key fingerprint: 9317 E5A6 3734 3024 1C97 DF9F 2EA4 0444 A0E6 8E8B

$ gpg --verify IMG_20170307_143742.jpg.proof.csv.asc

gpg: assuming signed data in 'IMG_20170307_143742.jpg.proof.csv'

gpg: Signature made Tue Mar 7 14:37:56 2017 CET

gpg: using RSA key 2EA40444A0E68E8B

gpg: Good signature from "noone@proofmode.witness.org" [unknown]

Primary key fingerprint: 9317 E5A6 3734 3024 1C97 DF9F 2EA4 0444 A0E6 8E8B

This proves that both files were signed by the given key and that their contents haven’t been modified since. It also works for video.

Why it can’t work

I get the general idea, but Proof Mode only seems to think about three involved parties:

- The activist trying to prove that something has happened

- The media trying to prove to the general public that the data sent in by the activist is authentic

- An attacker (the government etc.) trying to disprove that the data is authentic

But this leaves out the following attack vectors:

- A malevolent activist trying to pass fake data as authentic (happens all the time)

- Malevolent media trying to pass fake data as authentic (happens all the time, this is daily business for all the “Fake News” websites out there)

- An attacker trying to frame the activist by generating a proof for data which the activist has never recorded

- An attacker simply taking the device away or destroying it, so the key pair no longer exists and parts of the sensor data (MAC addresses, device ID) can no longer be checked

- An attacker trying to destroy the trust in the whole proof system

The problem with proving something is that there has to be a full chain of trust from the beginning to the end. This is incredibly hard to do. You basically need the whole device to be a certified, tamper-proof box, and this is how professional systems are built. Police body cameras, for example, are black boxes: you can only turn them on or off and download the data, nothing else, and many implement additional hardware security measures to prevent and detect tampering. High-end Nikon and Canon cameras also offer image authentication mechanisms built right into the hardware to detect manipulation. Many publishing companies now require photographers to follow strict guidelines after some major hoaxes slipped through, but even the Nikon Image Authentication System has its flaws.

Proof Mode doesn’t prove anything. All data sources, from the camera picture to the location data, can easily be faked. You sign your own data. No third party ever gets to see the data; the only person vouching for its authenticity is yourself. If Proof Mode made it possible to easily generate a proof for fake data, then all trust in the system would vanish. And Proof Mode offers plenty of ways to generate fake proofs.

Attack 1: The app signs everything

The Proof Mode app doesn’t record pictures and videos by itself; it just signs other applications’ data. Looking through the code, I noticed that it doesn’t even react to an actual camera event (Android has ACTION_IMAGE_CAPTURE for this), but just checks the standard camera data folders (the DCIM folder on both internal and external media) for changes every ten seconds and signs everything that looks like an image or video file.
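To illustrate why this design is a problem, here is a minimal Python sketch of a folder scan as I understand the behaviour described above. It is not Proof Mode’s actual code: `MEDIA_SUFFIXES`, `find_unsigned_media` and the convention of storing a `.asc` file next to each signed file are assumptions made for illustration only.

```python
from pathlib import Path

# Hypothetical set of extensions treated as "media"; the real app may differ.
MEDIA_SUFFIXES = {".jpg", ".jpeg", ".png", ".mp4", ".3gp"}


def find_unsigned_media(folder):
    """Return media files under `folder` that have no '<name>.asc' file yet.

    The scan only looks at file names. It cannot tell whether a file was
    written by the camera, copied over USB, or downloaded from the web.
    """
    unsigned = []
    for path in sorted(Path(folder).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in MEDIA_SUFFIXES:
            continue
        # Assumed convention: a detached signature sits next to the file.
        signature = path.with_name(path.name + ".asc")
        if not signature.exists():
            unsigned.append(path)
    return unsigned
```

A service that calls something like this every ten seconds and signs each result will happily sign anything that is dropped into the folder, whatever its origin.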

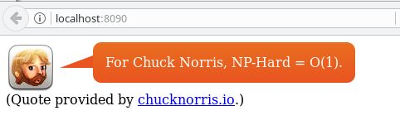

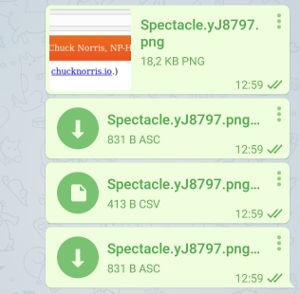

So I just connected the phone to my laptop via a USB cable, copied this Chuck Norris fact into the DCIM folder and a couple of seconds later I had a “proof” for it.

Proof Mode just created a proof that this picture was recorded right now on this device at the current location. In reality it was screen-captured days ago somewhere else. A malevolent activist can thus easily pretend a manipulated picture was taken at their current time and location. Or an attacker can get a hold of the device, put manipulated files on it and send proofs to other people (there’s no password for the private key, remember?), questioning the credibility of the activist and the whole system later. The EXIF data in the picture just has to match the metadata in the CSV file, that’s all.

Attack 2: Extract the private key

You can easily extract the private PGP key from the device, either if the device has been rooted (which is a common thing to do) or using one of the many tools available to law enforcement. Maybe the activist had to install a special Android ROM or root the device to be able to use a VPN and circumvent government censorship, so having a modified device is not uncommon by any means. You can then just import the PGP key into your favourite command line tool and create all the proofs you want.

Oh, the private key the app generates actually has a password. But it’s hardcoded:

private final static String password = "password";

Attack 3: You don’t even need the app

If you’ve had a close look at the verification step at the beginning of this post, you might have noticed that the public key is just a random 4096-bit key and the user ID is always set to “noone@proofmode.witness.org”:

$ gpg --list-packets public.key

# off=0 ctb=99 tag=6 hlen=3 plen=525

:public key packet:

version 4, algo 3, created 1488887810, expires 0

pkey[0]: [4096 bits]

pkey[1]: [17 bits]

keyid: 2EA40444A0E68E8B

# off=528 ctb=b4 tag=13 hlen=2 plen=27

:user ID packet: "noone@proofmode.witness.org"

# off=557 ctb=89 tag=2 hlen=3 plen=558

:signature packet: algo 3, keyid 2EA40444A0E68E8B

version 4, created 1488887819, md5len 0, sigclass 0x13

digest algo 2, begin of digest 34 2a

hashed subpkt 2 len 4 (sig created 2017-03-07)

hashed subpkt 27 len 1 (key flags: 83)

hashed subpkt 11 len 3 (pref-sym-algos: 9 8 7)

hashed subpkt 21 len 5 (pref-hash-algos: 8 2 9 10 11)

hashed subpkt 30 len 1 (features: 01)

subpkt 16 len 8 (issuer key ID 2EA40444A0E68E8B)

data: [4096 bits]

# off=1118 ctb=b9 tag=14 hlen=3 plen=525

:public sub key packet:

version 4, algo 2, created 1488887819, expires 0

pkey[0]: [4096 bits]

pkey[1]: [17 bits]

keyid: CCB54BC9E45BE84F

# off=1646 ctb=89 tag=2 hlen=3 plen=543

:signature packet: algo 3, keyid 2EA40444A0E68E8B

version 4, created 1488887819, md5len 0, sigclass 0x18

digest algo 2, begin of digest d4 5a

hashed subpkt 2 len 4 (sig created 2017-03-07)

hashed subpkt 27 len 1 (key flags: 0C)

subpkt 16 len 8 (issuer key ID 2EA40444A0E68E8B)

data: [4096 bits]

You might suspect where this is going. Just take your favourite PGP application, create a key with identical properties and fake all the proofs you want. If someone comes along later and wants the keys and the device to check the proof, just say the device was taken from you at a police checkpoint when you left the area. Losing the device was on my list of expected attack vectors, right?

Can it be fixed?

In my opinion: No. There’s just no way a smartphone with an open operating system could ever be used for the creation of reliable proofs if the person creating the footage, the device owner, the holder of the keys and the one vouching for the security of the device are all the same person.